Normal Distribution Demystified

Understanding the Maximum Entropy Principle

Have you ever wondered why we often use the normal distribution?

How do we derive it anyway?

Why do many probability distributions have the exponential term?

Are they related to each other?

If any of the above questions make you wonder, you are in the right place.

I will demystify it for you.

1 Fine or Not Fine

Suppose we want to predict if the weather of a place is fine or not.

However, we have no information about the place.

We don’t know if it’s in Japan, the US, or a country on a planet in a galaxy far, far away.

How can we develop a probability distribution model to predict the probability of “Fine”?

We don’t want to introduce bias or assumption into our weather model since we know nothing about the weather.

We want to be honest.

So, our model should reflect that we are entirely ignorant about the weather.

What kind of probability distribution should we use?

Should we use the normal distribution?

Not really — we don’t have the mean and standard deviation of the weather, so we cannot use the normal distribution.

As we don’t know anything, we can only randomly guess, can’t we?

That’d be like a coin flip, right?

2 Maximum Ignorance and Honesty

Given that we have no information, our most unassuming model is a coin flip.

So, it’s the uniform distribution.

Although this keeps us honest, it would not be a very accurate weather model because the uniform distribution is entirely ignorant.

We could use a different probability distribution if we have some weather information.

For example, if we know the mean and standard deviation, we could use the normal distribution, which would be more beneficial than random guesses by the uniform distribution.

In other words, when we use a particular probability distribution (other than the uniform distribution) for the weather, we claim that we have some information about the weather.

If we claim that we have some information without proof or statistics, we would be dishonest, and no one should ever believe our predictions.

So, we should use a distribution expressing what we know and don’t know.

By using the uniform distribution, we tell the world that we are maximally ignorant about the weather and we are sincere.

The question is how to express what we’ve said in mathematics.

That is where the concept of information entropy comes in.

3 Maximum Entropy Distribution with no Information

The information entropy is the measure of uncertainty.

Higher entropy means that we are less sure about what will happen next.

As such, we should maximize the entropy of our probability distribution as long as all required conditions (constraints) are satisfied.

This way, we remove any unsupported assumptions from our distribution and keep ourselves honest.

Let’s look at an example by maximizing the entropy of our weather model.

Below is the entropy of our weather model.

We know nothing about the probability distribution of the weather except that the total probability must sum up to 1.

It is the only constraint since we know nothing about the weather distribution.

We will maximize the entropy of the probability distribution while satisfying the constraint.

It is a constrained optimization that we can solve using the Lagrange multiplier.

Let’s define the Lagrangian using the Lagrange multiplier

We find the maximum of

The gradient of

Here,

So, we have two equations to solve.

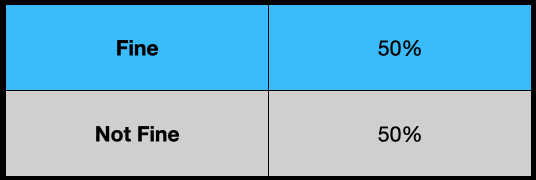

Solving these equations, we know both probabilities are the same.

We can use the constraint to eliminate the Lagrange multiplier

The “Fine” and “Not Fine” probabilities are both 0.5.

So, our model (the probability distribution) of the weather is a uniform distribution.

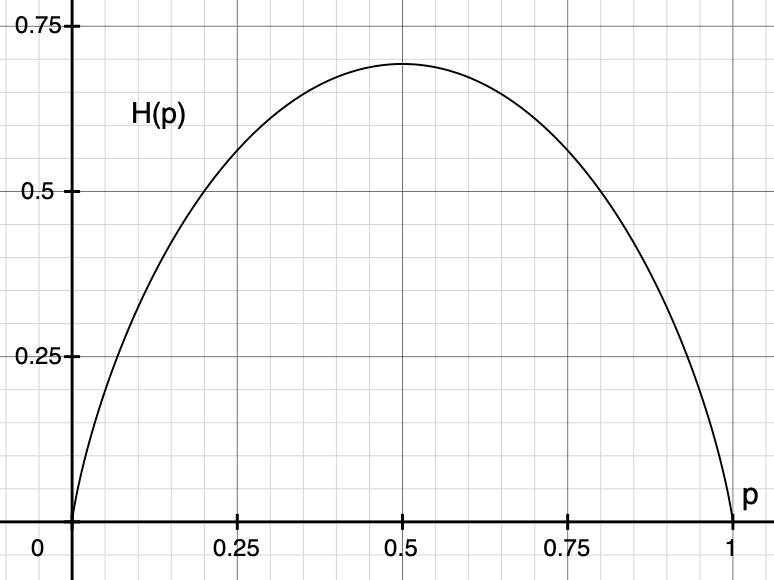

Let’s plot the entropy and visually confirm that

The diagram below shows that our probability distribution’s entropy becomes the maximum at

In summary, we have derived the uniform distribution by maximizing the entropy of our probability distribution for which we have no specific information.

We call the approach the principle of maximum entropy which states the following:

The probability distribution which best represents the current state of knowledge is the one with the largest entropy, in the context of precisely stated prior data (such as a proposition that expresses testable information). Principle of maximum entropy (Wikipedia)

In slightly more plain English, if we maximize the entropy of our distribution while satisfying all constraints we know, the probability distribution becomes the honest representation of our knowledge.

4 Many Discrete Outcomes

We can apply the maximum entropy principle to the probability distribution with more outcomes.

Let’s extend our model to

Like the previous section, we calculate the partial derivative of

Solving the above for

Once again, all

There are

As such, the uniform distribution is given below:

5 Continuous Outcomes

Let’s apply the principle of maximum entropy to a continuous distribution.

Let’s say

As before, we can define the sum of total probability, the entropy, and the Lagrange function.

We want to know what kind of

However,

We can optimize a functional using the Euler-Lagrange equation from the calculus of variations.

In calculus of variations, we optimize the following form of functionals using the Euler-Lagrange equation.

The square brackets mean

All you need to do is to solve the following Euler-Lagrange equation to find the optimal function

So, let’s put our Lagrangian in the same form.

The last term

We could ignore the last term in this example since we will calculate the partial derivative by

Let’s put the contents of the curly brackets as

We are ready to solve the Euler-Lagrange equation for

We can ignore the second term as there is no

Therefore, we are solving the following equation:

As a result, we obtain the following:

Using the sum of probability, we eliminate

Once again, the maximum entropy distribution without any extra information is the uniform distribution.

But the uniform distribution is not that useful for predicting the temperature.

We need to incorporate more information to have a better weather forecast model.

6 The Maximum Entropy Distribution with the Mean and Standard Deviation

If we know the mean and standard deviation of the temperature, what would be the maximum entropy distribution?

You’ve probably guessed it — it’s the normal distribution.

Let’s do some math exercises. The formulas for the total probability and the entropy remain the same as before.

This time, we know more information about the distribution. Namely, the mean and standard deviation:

We can use them as additional constraints while maximizing the entropy. For each constraint, we add a Lagrange multiplier.

The last term includes both the mean and standard deviation in the constraint.

The Lagrangian functional is as follows:

This time, I ignored the terms that will become zero due to the partial derivative by

So, we will apply the Euler-Lagrange equation to the following:

As a reminder, this is the Euler-Lagrange equation.

The second term is zero since we do not have

So, we only calculate the partial derivative of

Then, we ensure

The total probability must add up to 1.

We define a new variable

So,

We substitute

The last integral is the Gaussian integral, giving the square root of

So, we have the following equation:

Therefore, we have the relationship between

The standard deviation can be calculated as follows:

We can eliminate

Once again, we use the following substitution:

So, the standard deviation formula can be simplified:

The integral can be calculated using the integral by parts along with the Gaussian integral.

The first term becomes zero as the exponential of

The second term is the Gaussian integral (the square root of

All in all:

As a result,

Also,

Finally,

We see that the normal distribution is the maximum entropy distribution when we only know the mean and standard deviation of the data set.

It makes sense why people often use the normal distribution as it is pretty easy to estimate the mean and standard deviation of any data set given enough samples.

That doesn’t mean that the true probability distribution is the normal distribution. It just means that our model (probability distribution) reflects what we know and don’t — the mean, standard deviation, and nothing else.

If the normal distribution can explain the real data well, likely, the true distribution does not have any more specific conditions. For example, we may be dealing with noise or errors.

Suppose the normal distribution doesn’t explain the real data well. In that case, we may need to find out more specific information about the distribution so that we can incorporate it into our model, which may not be so easy depending on the data set you are dealing with.

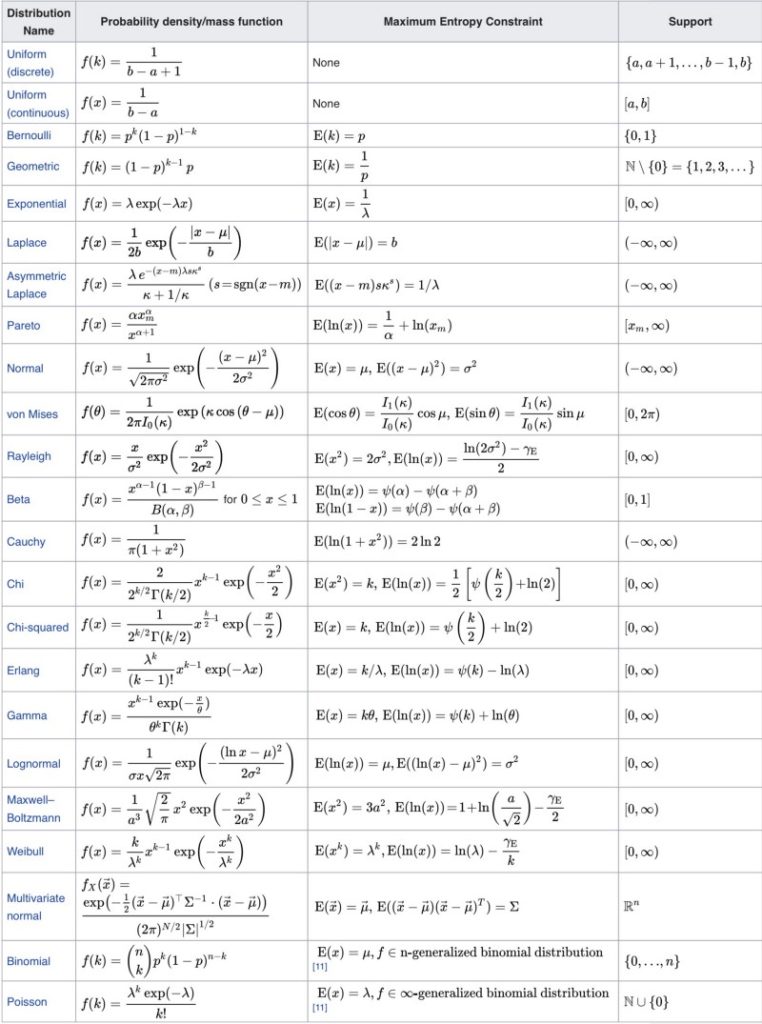

So, what other distributions can we derive using the maximum entropy principle?

7 Maximum Entropy Probability Distributions

It turns out there are many such distributions.

Wikipedia has a table of maximum entropy probability distributions and corresponding maximum entropy constraints.

Many distributions have the exponential term because the entropy maximization process may or may not eliminate the exponential.

8 Regularization with the Maximum Entropy

In deep learning, you might have seen that some loss function includes negative entropy. As the loss function is minimized, the entropy is maximized.

Adding the maximum entropy term in the loss function helps to avoid unsupported assumptions creep in, so it’s a form of regularization.

9 Final Thoughts…

Once upon a time, when I was studying the subject of probability and statistics, our teacher told us to get used to the normal distribution.

We learned how to use the Normal distribution table, how to interpret the standard deviation, and other stuff.

Eventually, it became second nature, and I stopped asking myself why the math looked so weird.

I bet many students did the same, and their life also went on.

Later in life, I encountered the maximum entropy concept while reading a reinforcement learning paper.

So, I went down the rabbit hole and found the wonderland where all those weird probability distribution formulas no longer look weird.

I hope you are in the wonderland now.